What Exactly Is HappyHorse-1.0? From Mysterious Dark Horse to Official Alibaba Confirmation – The Full Reveal

In early April 2026, a previously unknown AI model named HappyHorse-1.0 appeared without warning and quickly drew attention across the AI video generation field.

No official announcement.

No marketing campaign.

No company attribution.

Yet within days, it climbed to the top of the Artificial Analysis leaderboards, outperforming leading models like Seedance 2.0 and Kling 3.0 in blind user evaluations.

This article provides a complete breakdown:

- What HappyHorse-1.0 actually is

- Why it ranked #1 so quickly

- Its core features and capabilities

- The official Alibaba confirmation

- The user pain points it addresses (especially compared with Seedance 2.0)

What Is HappyHorse-1.0? (Complete Overview)

HappyHorse-1.0 is a next-generation AI video generation model that transforms text prompts or reference images into cinematic-quality videos.

It introduces a major shift in architecture:

👉 A unified multimodal generation system where video and audio are produced together in a single pipeline.

Key capabilities include:

- Native 1080p HD video generation

- Integrated synchronized audio output

- Support for Text-to-Video (T2V) and Image-to-Video (I2V)

- Advanced motion synthesis and temporal consistency

- High prompt accuracy and semantic understanding

Unlike earlier tools, it is not just a generator; it is a complete video production system in one model.

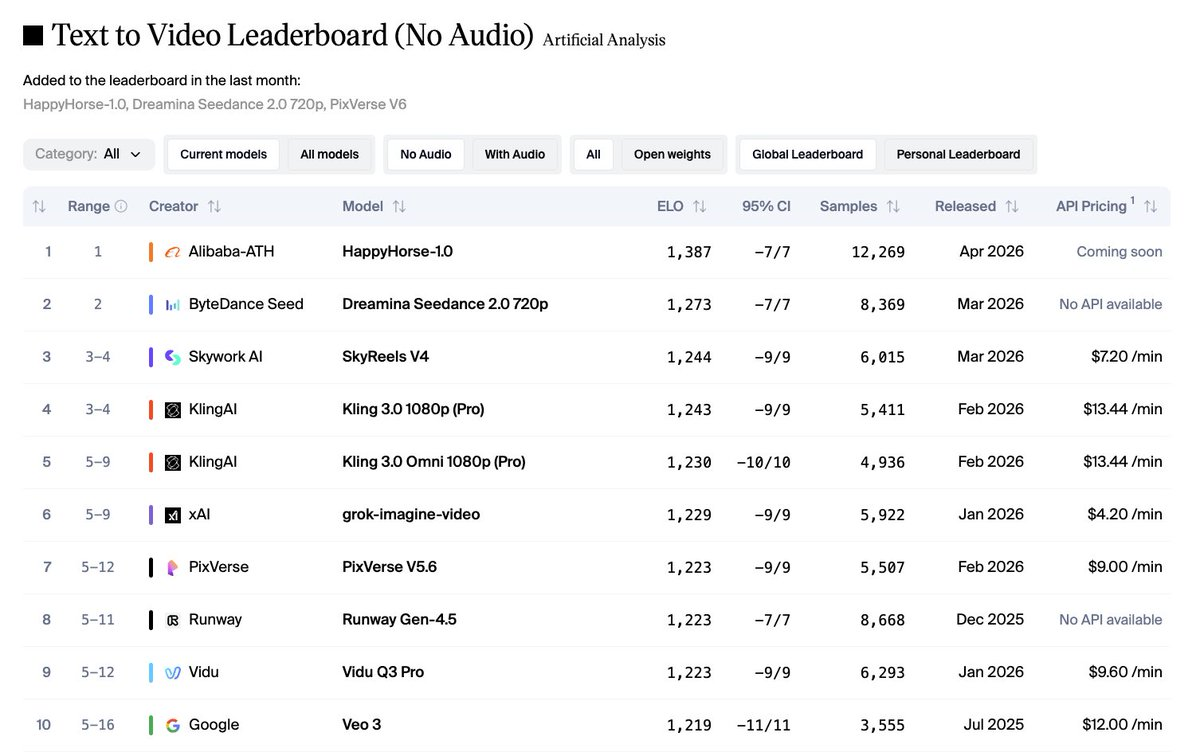

HappyHorse-1.0 Ranking: #1 on Artificial Analysis Leaderboards

One of the most important reasons behind the explosive attention is its verified ranking performance.

On the Artificial Analysis Video Arena leaderboards, it placed:

- 🥇 #1 in Text-to-Video (no audio)

- 🥇 #1 in Image-to-Video (no audio)

- 🥈 Top-tier performance in audio-enabled categories (near #1)

These rankings are not based on marketing claims.

They are based on:

- Blind A/B testing

- Real user voting

- Large-scale comparison across models
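How blind pairwise votes become a ranking can be illustrated with an Elo-style update, a common choice for arena leaderboards. This is a minimal sketch under stated assumptions: the article does not describe the leaderboard's exact scoring method, and the model names, votes, `k` factor, and starting rating below are made up for illustration.

```python
from collections import defaultdict


def expected_score(r_a, r_b):
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400.0))


def rate(votes, k=32.0, start=1000.0):
    """Turn (winner, loser) pairs from blind comparisons into ratings.

    k and start are illustrative defaults, not values from any real leaderboard.
    """
    ratings = defaultdict(lambda: start)
    for winner, loser in votes:
        # The less expected the win, the larger the rating transfer.
        delta = k * (1.0 - expected_score(ratings[winner], ratings[loser]))
        ratings[winner] += delta
        ratings[loser] -= delta
    return dict(ratings)


# Hypothetical votes for illustration only; not real leaderboard data.
votes = [
    ("model_a", "model_b"),
    ("model_a", "model_c"),
    ("model_b", "model_c"),
    ("model_a", "model_b"),
]
ratings = rate(votes)
ranking = sorted(ratings, key=ratings.get, reverse=True)
print(ranking)
```

Because voters never see which model produced which clip, each vote reflects only the output itself, and a model reaches the top only by winning comparisons against already highly rated opponents.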

This means voters preferred HappyHorse outputs more often than outputs from:

- Seedance 2.0

- Kling 3.0

- PixVerse V6

👉 This is a critical signal:

The model is not just technically strong; viewers actually prefer its output when they do not know which model produced it.

Why HappyHorse-1.0 Became #1 So Fast

Its rapid rise is directly tied to solving the biggest limitations of existing AI video tools.

1. True Audio + Video Generation in One Pass

Most competing models still rely on a two-step process:

- Video generation

- Audio added afterward

This often leads to mismatch and unnatural results.

HappyHorse-1.0 generates:

- Dialogue

- Background sound

- Environmental audio

- Lip-sync

👉 All in one step, resulting in far more coherent outputs.

2. Superior Motion and Temporal Consistency

Traditional problems in AI video include:

- Flickering frames

- Character inconsistency

- Unrealistic motion

HappyHorse improves:

- Frame stability

- Character continuity

- Natural movement

3. Strong Prompt Adherence

Instead of loosely interpreting prompts, HappyHorse:

- Follows instructions more precisely

- Maintains narrative structure

- Handles complex scenes more reliably

4. Multi-Shot Storytelling

Many AI tools are limited to single-shot clips.

HappyHorse supports:

- Scene transitions

- Consistent characters

- Narrative continuity

This enables full short-form storytelling, not just isolated visuals.

Core Features of HappyHorse-1.0

Text-to-Video and Image-to-Video

Both modes are supported within one unified system:

- Text → Video

- Image → Video

Native 1080p Output

- High-definition video generation

- Production-ready visual quality

Integrated Audio Generation

One of the standout capabilities:

- Audio is generated together with video

- Includes speech, ambient sound, and effects

- Eliminates the need for separate audio tools

Fast Generation Speed

- Near real-time generation online

- Efficient local inference on high-end hardware

This enables rapid iteration and creative testing.

Multiple Visual Styles

It supports a wide range of visual styles, including:

- Photorealistic

- Artistic

- Stylized cinematic outputs

The Real User Pain Points in AI Video (And Why HappyHorse Wins)

To understand its impact, it helps to examine the frustrations users report, especially with models like Seedance 2.0.

1. Long Queue Times (Critical Bottleneck)

A major issue with Seedance 2.0:

- Long waiting queues

- Heavy congestion during peak usage

- Delayed generation times

For creators, this leads to:

- Interrupted workflow

- Slower content production

- Reduced efficiency

👉 HappyHorse focuses on fast generation, significantly reducing waiting friction.

2. High Cost Per Generation

Seedance 2.0 is often associated with:

- Higher usage costs

- Expensive scaling for frequent generation

- Limited experimentation due to pricing

This creates barriers for:

- Indie creators

- Small teams

- High-volume content production

👉 HappyHorse lowers this barrier by enabling:

- More flexible usage

- Better scalability

- Cost-efficient experimentation

3. Fragmented Workflow

Traditional AI video pipelines require several separate steps:

- Generate video

- Generate audio

- Sync lip movement

- Edit externally

This is inefficient and time-consuming.

👉 HappyHorse simplifies everything:

- One prompt

- One generation step

- One output

4. Inconsistent Output Quality

Common issues in other models:

- Motion instability

- Weak scene continuity

- Audio-video mismatch

👉 HappyHorse improves:

- Visual consistency

- Narrative coherence

- Audio alignment

5. Limited Creative Control

Users often face:

- Weak prompt interpretation

- Lack of stylistic consistency

- Unpredictable outputs

👉 HappyHorse offers:

- Strong semantic understanding

- More controllable generation

- Higher reliability

The Mystery Phase: Why Everyone Was Confused

Before official confirmation, HappyHorse-1.0 triggered massive speculation.

Reasons:

- No branding or attribution

- Immediate top ranking

- Strong performance across tasks

Theories included:

- An internal Google model

- A ByteDance experiment

- A breakthrough open-source system

The anonymous release created:

👉 Curiosity

👉 Viral attention

👉 Trust through blind testing

Official Confirmation: Alibaba Behind HappyHorse-1.0

On April 10, 2026, the mystery was resolved.

HappyHorse-1.0 was confirmed to be developed by Alibaba Group, under:

- Taotian Group

- ATH-AI Innovation Division

Why This Confirmation Matters

This signals:

- Major tech companies are accelerating AI video development

- Competition is entering a new stage

- Enterprise-grade models are becoming more accessible

It also confirms:

👉 The performance is backed by serious infrastructure

👉 The model is part of a broader strategic initiative

Technical Positioning

HappyHorse-1.0 is built on a large-scale Transformer architecture designed for multimodal generation.

Key strengths:

- Unified audio-video modeling

- Strong temporal coherence

- High semantic accuracy

- Multi-scene generation capability

This allows:

- More realistic motion

- Better storytelling

- Higher consistency

Who Should Use HappyHorse-1.0

Content Creators

- Short-form video creators

- Social media producers

Marketers

- Advertising content

- Product videos

Educators

- Visual explanations

- Training materials

Developers

- API integrations

- AI-powered applications

Real-World Use Cases

Social Media Content

- Rapid video creation

- High engagement visuals

Marketing Campaigns

- Scalable ad production

- Faster iteration

Storytelling

- Multi-shot narratives

- Character-driven content

Image Animation

- Turning static assets into dynamic visuals

The Future of HappyHorse-1.0

With Alibaba backing the project, several developments are expected:

API Expansion

- Developer-friendly integrations

- Broader ecosystem adoption

Performance Improvements

- Faster generation speeds

- More efficient inference

Cost Optimization

- More accessible pricing

- Better scalability

Quality Enhancements

- Improved realism

- Stronger consistency

The Bottom Line

HappyHorse-1.0 is not just another AI video model.

It ranks #1 in the no-audio Text-to-Video and Image-to-Video categories on the Artificial Analysis leaderboards, a result based on blind user votes rather than marketing claims.

It directly addresses critical pain points:

- ❌ Long queue times

- ❌ High costs

- ❌ Fragmented workflows

- ❌ Inconsistent results

And replaces them with:

- ✅ Fast generation

- ✅ Unified audio + video output

- ✅ Strong prompt control

- ✅ Scalable production

Most importantly:

It marks the transition from experimental AI video tools to production-ready systems.

With Alibaba officially entering the space, the competition is no longer just about quality.

👉 It is about speed, cost, and usability at scale.

And that is where the next phase of AI video begins.